Loggly - Responsive Log Management

Cisco recently announced that it has invested $10M in Loggly, a cloud-based log management service. I had vaguely heard of Loggly before, but never properly investigated it. Let’s dive in and find out: What is Loggly, how does it work, why/where would I use it, and what other options do I have?

What is Loggly?

Loggly helps cloud-centric organizations—organizations that build and manage cloud-facing applications—to solve operational problems faster. Our solution analyzes log data from virtually any application, system or platform to answer your burning questions

Loggly is a cloud-based log management application. Submit your logs to Loggly’s systems by any of several methods, and you can then query and view all your logs in one place, in real time. Sweet. From that data you can create graphs, parse out specific events, alert when certain patterns are seen, etc.

If you’ve ever had to log into 10 different devices to find the relevant logs, you’ll appreciate the need for centralised log management. If you’ve ever spent hours crafting massive command lines involving multiple grep, sed & awk statements, you’ll love fast, powerful searching. If you’ve had to take CSV files and turn them into charts using Excel, you’ll love being able to just send someone a link to run a real-time report.

How Does it Work?

To use Loggly, first sign up for an account, then start forwarding data from whatever source you’ve got. You can submit data via various methods such as syslog, TCP/TLS, HTTP calls, etc. When submitting data, you include an access key that associates the data with your account. Here are the steps I went through to get some Apache logs from an AWS EC2 instance submitted to Loggly:

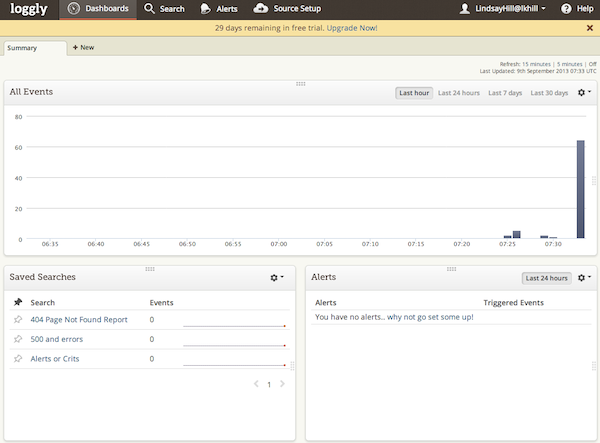

- Sign up with Loggly. Go to loggly.com, and sign up for a free trial. After doing that, I’ve got a blank slate - no log entries yet:

Empty Loggly - no logs yet

- Configure rsyslog to send logs to Loggly. The test EC2 instance I’m using is a Linux box running rsyslog, so I followed the instructions provided for configuring rsyslog:


# wget -q -O - https://www.loggly.com/install/configure-syslog.py | python - install

*************************************************************

****** Starting Loggly Syslog Configuration Script ******

*************************************************************

Python version check successful: Installed version is 2.6. Minimum required version is 2.6

Reading environment details....

Installation started

Operating System: Red Hat Enterprise Linux Server-6.4(Santiago)

Syslog versions:

1. rsyslog(5.8)

Performing sanity check....

Sanity Check Passed. Your environment is supported.

Configuring rsyslog-5.8

Reading Loggly credentials from user....

Enter the username that you use to log into your Loggly account.

Loggly Username [root]: LindsayHill

Password for LindsayHill:

Enter your Loggly account name. This is your subdomain. For example if you login at mycompany.loggly.com,

your account name is mycompany.

Loggly Account Name [LindsayHill]:lkhill

This system is now configured to use "11111111-aaaa-4444-aaaa-eeeeeeeeeeee" as its Customer Token.

Reading configuration directory path....

Reading default configuration directory path from (/etc/rsyslog.conf).

The Loggly Syslog Configuration Script will create a new configuration file /etc/rsyslog.d/22-loggly.conf

Creating configuration file at /root/22-loggly.conf

SELinux is on. Please disable it and restart rsyslog daemon manually.

If you wish to use a different Customer Token, replace 11111111-aaaa-4444-aaaa-eeeeeeeeeeee

with the token you wish to use, in the file /etc/rsyslog.d/22-loggly.conf.

Installation completed

*************************************************************

****** Finished Loggly Syslog Configuration Script ******

*************************************************************

I had to fix the SELinux permissions on /etc/rsyslog.d/22-loggly.conf. I think it is just lazy of the developers to say “Disable SELinux”, when all I had to do was set the context to system_u:object_r:etc_t. After making those changes, and running service rsyslog restart, we were sending logs to Loggly via Syslog over TCP.

-

Configure rsyslog to read Apache logs. The previous configuration was only forwarding regular syslogs. I also needed to configure rsyslog to read my Apache access logs. I used these instructions to get rsyslog to monitor

/var/www/httpd/access_log. After making those changes, and restarting rsyslog, my web logs were being sent to Loggly. -

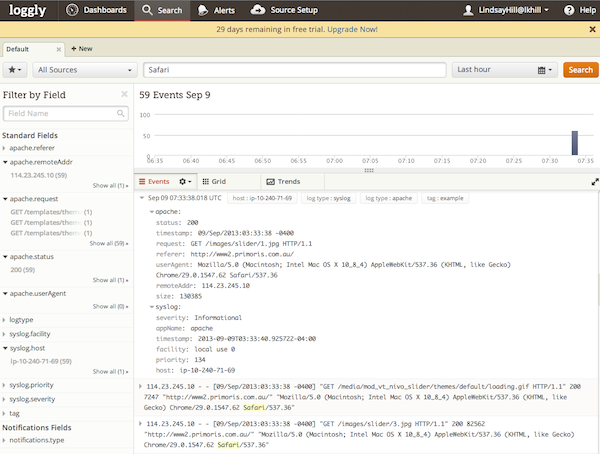

Profit! View and search my logs in near real-time: Sure enough, logs started appearing in Loggly, and I could run quick searches:

Loggly - logs starting to come through

Loggly - running a search

Note that it automatically picks out various fields in the data, such as apache.status. I didn’t configure any of that; Loggly detected the log format on its own.
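To get a feel for what that kind of field extraction involves, here is a minimal Python sketch that pulls similar fields out of an Apache combined-format line. It is not Loggly’s parser: the regex, the field names (`status`, `path` and so on) and the sample log line are my own assumptions, purely for illustration.

```python
import re

# Regex for the Apache "combined" log format. Field names are illustrative
# assumptions; Loggly's own parser and naming may well differ.
COMBINED = re.compile(
    r'(?P<host>\S+) (?P<ident>\S+) (?P<user>\S+) '
    r'\[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) (?P<protocol>[^"]+)" '
    r'(?P<status>\d{3}) (?P<size>\d+|-)'
    r'(?: "(?P<referer>[^"]*)" "(?P<agent>[^"]*)")?'
)


def parse_access_line(line):
    """Return a dict of fields for one access-log line, or None if it does not match."""
    match = COMBINED.match(line)
    if match is None:
        return None
    fields = match.groupdict()
    fields["status"] = int(fields["status"])
    # Apache logs "-" when no response body bytes were sent.
    fields["size"] = 0 if fields["size"] == "-" else int(fields["size"])
    return fields


# Made-up sample line (documentation IP address, invented request).
sample = ('203.0.113.7 - - [10/Oct/2013:13:55:36 +0000] '
          '"GET /index.html HTTP/1.1" 200 2326 '
          '"-" "Mozilla/5.0"')
event = parse_access_line(sample)
print(event["status"], event["path"])
```

Once the status code is a real integer field rather than text buried in a line, a search like "all 5xx responses" becomes a simple comparison instead of a grep pattern.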

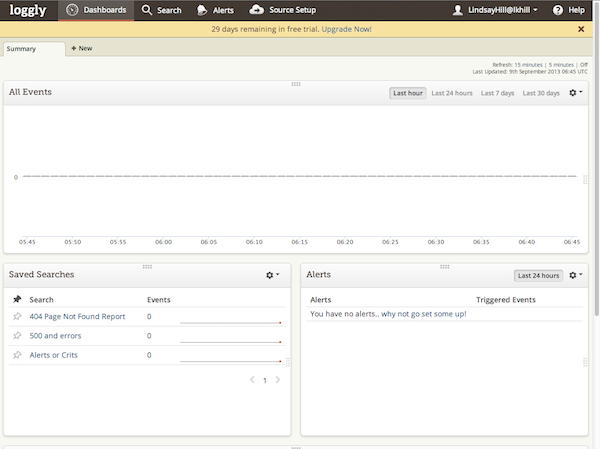

Now I can continue regularly submitting data, and search through it whenever I want, for whatever patterns I want. It starts to get interesting when I can start seeing trends in the data, or set up alerts that tell me when certain patterns are seen.
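As a rough illustration of what a pattern-based alert boils down to, the sketch below flags a batch of already-parsed events when too many of them are server errors. This is not how Loggly implements alerting; the function name `error_rate_alert`, the 5% threshold and the event dictionaries are all hypothetical.

```python
from collections import Counter


def error_rate_alert(events, threshold=0.05):
    """Return True when the share of 5xx responses exceeds the threshold."""
    if not events:
        return False
    # Bucket events by status class: 2 for 2xx, 4 for 4xx, 5 for 5xx, etc.
    classes = Counter(event["status"] // 100 for event in events)
    return classes[5] / len(events) > threshold


# Invented data: 18 successful requests and 2 server errors (10% errors).
events = [{"status": 200}] * 18 + [{"status": 503}] * 2
print(error_rate_alert(events))
```

A hosted service would run something like this continuously over a sliding time window and notify you, but the core idea is the same: a saved search plus a threshold.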

After collecting data for a few days, I had a bit more to work with. But Loggly was still super-quick at running queries. I could now start trying out a few more of the visualisations - graphs, charts, etc. I haven’t dug into the Query Language deeply enough yet to know if it can do everything I want, but the basics are well covered. Tools like this really come into their own when you build up a complex query, and then save it - next time it’s as simple as picking a bookmark, and you can see current results.

Why/Where Would I Use It?

Loggly says that anyone who has text-based logs, either structured or unstructured, can use it. They support many methods for submitting data, but they don’t have agents, and some of the log submission methods will need application changes. These are the characteristics I see in the target customers:

- Developed custom cloud-based web application
- Allergic to hosting your own systems or applications
- Love OpEx, hate CapEx
- Need alerting info from application log data
- Don’t care about what happened two weeks ago - just care about now

When I look at that list, I think: Complete opposite of traditional organisations. There might be some traditional organisations that would benefit from Loggly, but I think the target market is very much hip new Web 3.11 companies. Nothing wrong with that, and you may find a place for it in your staid company. But you should be very clear on what it is, and what it isn’t.

Pricing

Loggly is very upfront with their pricing. Pricing is based upon your daily log volumes, and the length of time you want to keep the data. There’s also a free tier.

- Lite: 200MB/day, 7-day data retention. Free
- Development: 1GB -> 10GB/day, 7-day data retention. $49/month -> $374/month
- Production: 7GB -> 150GB/day, 15-day data retention. $349/month -> $7,400/month
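To compare the paid tiers, it helps to normalise them to a monthly cost per GB of daily volume. Here is a quick sketch using only the endpoint figures from the pricing list above; the helper name `monthly_cost_per_daily_gb` is mine, and I’m not assuming anything about how the price scales between the endpoints.

```python
# (tier, GB/day, USD/month) endpoints quoted in the pricing list above.
PRICE_POINTS = [
    ("Development", 1, 49),
    ("Development", 10, 374),
    ("Production", 7, 349),
    ("Production", 150, 7400),
]


def monthly_cost_per_daily_gb(gb_per_day, usd_per_month):
    """Monthly price divided by the daily log volume it covers."""
    return usd_per_month / gb_per_day


for tier, gb, usd in PRICE_POINTS:
    per_gb = monthly_cost_per_daily_gb(gb, usd)
    print(f"{tier:12} {gb:>4} GB/day  ${usd:>5}/month  ${per_gb:.2f} per GB/day")
```

That works out to $49.00 and $37.40 per GB/day at the two ends of Development, and $49.86 and $49.33 at the two ends of Production. On these numbers, Production doesn’t get cheaper per GB as you scale; the difference listed for the higher tier is the longer 15-day retention.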

Caveats and Alternatives

It’s not a perfect solution. Retaining data for a maximum of 15 days means that it’s only really useful for near real-time analysis and alerting. It can’t be used for long-term capacity planning, or where logs need to be kept for audit purposes. Most people never go back more than a couple of weeks in their logs anyway, so that may not be a big deal. It depends on your application. This will probably be the critical decision in evaluating Loggly: How long do you need to keep your data?

Storing all data off-site may be a problem for some types of data, and some jurisdictions. But if it’s just fault analysis for your simple web app, who cares? Not a problem. At least you can try out Loggly at a low cost, and if it doesn’t work out, then you can investigate other tools.

Using existing logging applications such as rsyslog or log4net is both good and bad. It’s great that you can just use your existing tools, but it does lead to some limitations. You may want a dedicated logging agent if you have complex needs for forwarding data. For most common needs, Loggly’s variety of input methods will be fine.
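As an example of leaning on existing tools, a Python app can use the standard library’s logging module to hand records to the local syslog daemon, which then takes care of forwarding. This is a minimal sketch, assuming rsyslog is listening for UDP syslog on localhost port 514 (often not enabled by default; on Linux the usual local choice is the /dev/log socket instead). The logger name `webapp` and the `local0` facility are arbitrary choices of mine.

```python
import logging
import logging.handlers


def build_logger(name="webapp", address=("localhost", 514)):
    """Return a logger that sends records to a syslog daemon over UDP."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    handler = logging.handlers.SysLogHandler(
        address=address,  # assumption: a UDP syslog listener at this address
        facility=logging.handlers.SysLogHandler.LOG_LOCAL0,
    )
    # A "name: message" prefix lets the syslog daemon pick out the program tag.
    handler.setFormatter(
        logging.Formatter("%(name)s: %(levelname)s %(message)s")
    )
    logger.addHandler(handler)
    return logger


log = build_logger()
# UDP is fire-and-forget: this does not fail if nothing is listening.
log.info("checkout completed order_id=%s", 1234)
```

The app only knows about syslog; where the logs end up is entirely a matter of daemon configuration. That is the upside of this approach, and also its limit: buffering, retries and filtering are whatever your syslog daemon gives you.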

Some of the alternatives out there:

- Splunk: The Big Daddy in this field. Forwarders, on-site storage, distributed search and indexing, enormous number of pre-written apps, huge capabilities for taking in data, and displaying it in whatever format you want. A fantastic tool, but with a price tag that can best be described as “reassuringly expensive.”
- Splunk Storm: A cloud-based version of Splunk, with some limitations, but much clearer pricing.
- vCenter Log Insight: VMware’s foray into log management. Hard to say if they’re committed to it or not. Will they just toss it aside like other things they’ve tried, or are they serious about this?

Anyone else notice that all of these products have a vaguely similar look and feel? I guess there are some common requirements, and only so many ways of presenting the data.

Why Did Cisco Invest In Loggly?

I haven’t quite figured that out yet. Cisco has enormous budgets, so $10M is a small amount for something that may or may not pan out. I don’t know what their longer-term plans are, but I do wonder if this could somehow be tied to the Meraki acquisition. Combine cloud-based management with cloud-based log management? I don’t know.

Conclusion

Loggly looks like a pretty interesting log management solution, particularly if your app is entirely cloud-based. It’s not suitable if you need long-term log analysis and storage, or if you need to keep your data on-site. But if you’re looking for fast, simple log analysis and alerting for cloud-based services, it’s certainly worth a look. It’s not cheap, but at least you can see exactly what the costs are going to be, and you can try it for free.